Latest Technology Trends in 2026

Technology doesn’t evolve in straight lines. It leaps, stalls, converges with something unexpected, and then suddenly every business scrambles to catch up. The trends shaping 2026 aren’t just incremental upgrades. They’re foundational shifts that change how companies build products, reach customers, and protect their operations. I’ve been tracking these shifts across client projects and my own products for over a decade, and the current moment feels different from anything before it.

What follows is a deep look at the technology trends actually reshaping industries right now. Not hype cycles or conference buzzwords, but developments that are changing how real businesses operate.

Generative AI and Applied AI

Artificial intelligence isn’t new. What’s new is how fast it went from “interesting experiment” to “mission-critical infrastructure.” Generative AI, the technology behind tools like ChatGPT and Claude, can now produce text, images, code, and video that’s genuinely useful in production workflows. Companies aren’t just experimenting anymore. They’re deploying AI across customer support, content creation, writing workflows, and software development.

The shift happened because large language models became accessible through APIs. You don’t need a research team to use AI anymore. A small business can plug into an API and automate tasks that used to require full-time employees. Content teams use AI to draft and refine. Sales teams use it to personalize outreach at scale. Developers use it to generate boilerplate code and debug faster.

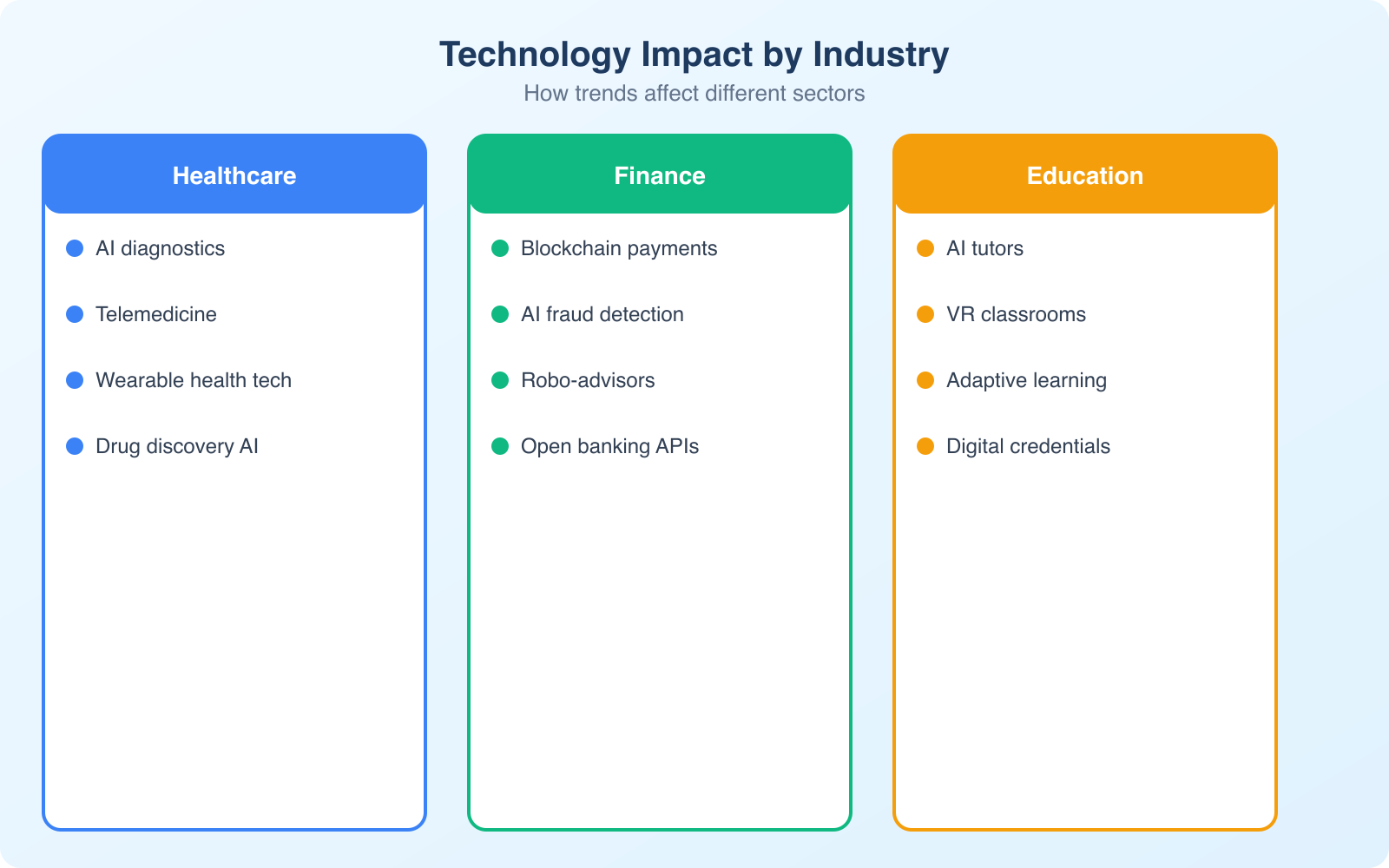

Applied AI goes beyond content generation. In manufacturing, AI-powered systems improve predictive maintenance and optimize supply chains. Healthcare uses AI for diagnostic tools and drug discovery. The precision these tools deliver wasn’t possible even three years ago: for specific conditions, diagnostic AI can now analyze medical images with accuracy comparable to that of specialist physicians.

The real game-changer is AI agents. These are systems that don’t just respond to prompts but take autonomous actions. An AI agent can monitor your website traffic, identify anomalies, and suggest fixes without human intervention. They can manage customer inquiries, schedule tasks, and coordinate between different software tools. This is where applied AI stops being a fancy search engine and starts becoming a digital teammate.
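Stripped to its skeleton, an agent is a loop: observe, let a model pick the next action, run the chosen tool, and repeat until done. This is a minimal sketch that assumes a rule-based `decide` function in place of the language-model call a real agent would make. The tools `get_traffic` and `open_ticket`, the traffic numbers, and the 50% drop threshold are all hypothetical.

```python
from typing import Callable

# Hypothetical tools; a real agent would wrap analytics and ticketing APIs.
def get_traffic(state: dict) -> str:
    visits = state["hourly_visits"]
    # Compare the latest hour with the average of the earlier hours.
    baseline = sum(visits[:-1]) / len(visits[:-1])
    state["drop"] = 1 - visits[-1] / baseline
    return f"latest={visits[-1]} baseline={baseline:.0f}"

def open_ticket(state: dict) -> str:
    state["tickets"].append(f"Traffic fell {state['drop']:.0%} below baseline")
    return "ticket opened"

TOOLS: dict[str, Callable[[dict], str]] = {"get_traffic": get_traffic, "open_ticket": open_ticket}

def decide(state: dict) -> str:
    """Stand-in for a model call: choose the next tool based on what has been observed."""
    if "drop" not in state:
        return "get_traffic"
    if state["drop"] > 0.5 and not state["tickets"]:
        return "open_ticket"
    return "finish"

def run_agent(state: dict, max_steps: int = 5) -> list[str]:
    log = []
    for _ in range(max_steps):  # hard step limit so the loop always terminates
        action = decide(state)
        if action == "finish":
            break
        log.append(f"{action}: {TOOLS[action](state)}")
    return log

state = {"hourly_visits": [1200, 1150, 1250, 400], "tickets": []}
for line in run_agent(state):
    print(line)
```

The step limit matters in practice: an agent whose policy never returns "finish" should stop on its own rather than run indefinitely.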

Don’t try to adopt every AI tool at once. Pick one workflow that’s costing you the most time, automate it with AI, measure the results, and then expand. The businesses getting the most from AI started small and iterated.

Edge Computing and Distributed Infrastructure

Cloud computing changed everything a decade ago. Now edge computing is changing the cloud. Instead of sending all data to centralized servers for processing, edge computing handles data closer to where it’s generated. This reduces latency, saves bandwidth, and makes real-time applications actually real-time.

Think about autonomous vehicles. A self-driving car can’t wait 200 milliseconds for a cloud server to process sensor data before deciding to brake. It needs local processing power. The same principle applies to manufacturing robots, smart retail systems, and IoT devices in agriculture. Edge computing brings the brain closer to the body.
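A quick calculation shows why 200 milliseconds matters. The speeds below are illustrative; the function converts km/h to metres per second and multiplies by the delay.

```python
def distance_during_delay(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled at constant speed while waiting delay_ms milliseconds."""
    metres_per_second = speed_kmh * 1000 / 3600
    return metres_per_second * delay_ms / 1000

for speed in (50, 100, 130):
    print(f"{speed} km/h: {distance_during_delay(speed, 200):.1f} m before braking starts")
```

At 100 km/h, that delay alone means about 5.6 m of travel before the brakes even engage, which is why this processing happens on the vehicle.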

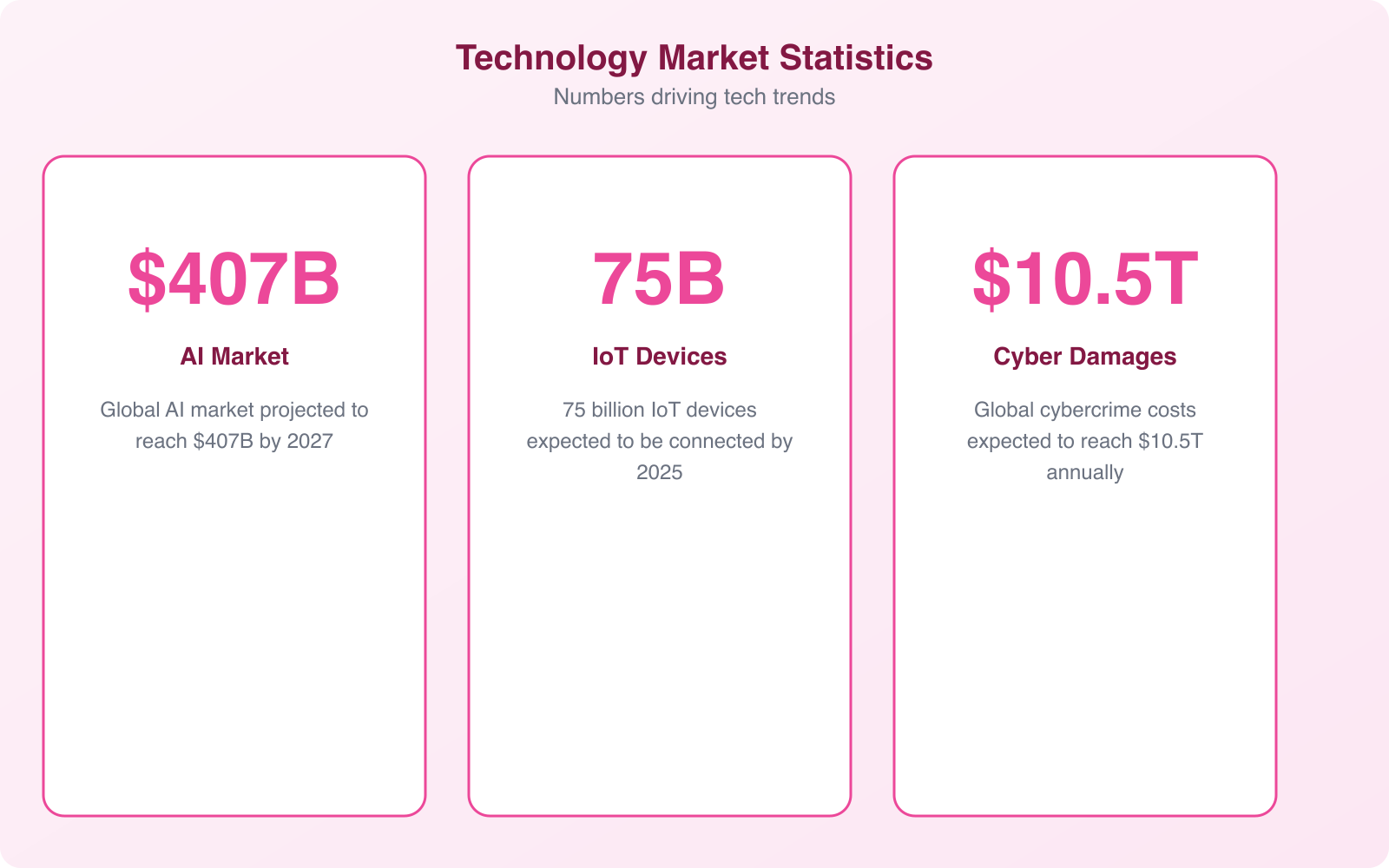

The market numbers tell the story. Edge computing spending has crossed $200 billion globally, and analysts project it will grow 15-20% annually through the end of the decade. AWS, Microsoft Azure, and Google Cloud have all launched dedicated edge computing services. Smaller players like Cloudflare and Fastly are building edge networks that let even small businesses run code at the network edge.

For website owners and developers, edge computing means faster page loads, better user experiences, and the ability to personalize content by geographic location without adding latency. If you’re running an ecommerce store, edge computing can serve product pages from the nearest data center, which can cut load times by 40-60% compared with a single origin server.
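A minimal sketch of the “nearest data center” idea, using great-circle (haversine) distance to pick an edge location for a visitor. The three locations and their coordinates are illustrative assumptions; real CDNs typically steer traffic with anycast or DNS-based routing informed by network measurements, not raw geographic distance.

```python
from math import asin, cos, radians, sin, sqrt

# Illustrative edge locations as (latitude, longitude); real providers run hundreds.
EDGE_LOCATIONS = {
    "frankfurt": (50.11, 8.68),
    "virginia": (38.95, -77.45),
    "singapore": (1.35, 103.82),
}

def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371 * asin(sqrt(h))  # 6371 km: mean Earth radius

def nearest_edge(user: tuple[float, float]) -> str:
    return min(EDGE_LOCATIONS, key=lambda name: haversine_km(user, EDGE_LOCATIONS[name]))

print(nearest_edge((48.86, 2.35)))  # a visitor in Paris: frankfurt
```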

Quantum Computing Moves Toward Practical Use

Quantum computing has been “just around the corner” for years. But the corner is getting much closer. IBM’s quantum processors now exceed 1,000 qubits. Google claimed quantum supremacy with its Sycamore processor in 2019. And practical applications are starting to emerge beyond the research lab.

The real promise isn’t speed for everyday tasks. Classical computers will always handle your spreadsheets faster. Quantum computing shines on problems that involve massive combinatorial complexity. Drug discovery, materials science, financial risk modeling, and supply chain optimization are all areas where quantum algorithms can explore solution spaces that classical computers simply can’t touch.

Pharmaceutical companies are exploring quantum computing to simulate molecular interactions. Instead of testing thousands of compounds in physical labs, they aim to model how a potential drug molecule interacts with target proteins, which could cut the discovery phase from years to months. JPMorgan Chase and Goldman Sachs are building quantum teams for portfolio optimization and fraud detection.

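What does this mean for smaller businesses? Not much directly, at least not yet. But it matters indirectly. A large enough quantum computer could break the public-key encryption (RSA and elliptic-curve cryptography) that protects most internet traffic today, and many experts expect that to become possible within the next decade. That means every business needs to start planning for post-quantum cryptography, for which NIST published its first standards in 2024. The transition to quantum-safe encryption isn’t optional. It’s a matter of when, not if.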
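Planning usually starts with an inventory of where cryptography is used. This sketch classifies algorithm names from such an inventory. The system names are hypothetical. The classification reflects the general view that Shor’s algorithm threatens RSA, Diffie-Hellman, and elliptic-curve schemes, while NIST’s 2024 standards (ML-KEM, ML-DSA, SLH-DSA) are designed to resist it. AES-256 is generally treated as safe, and anything unrecognized is left for manual review.

```python
# Families broken by Shor's algorithm on a large enough quantum computer.
QUANTUM_VULNERABLE = ("RSA", "ECDSA", "ECDH", "ED25519", "X25519", "DH", "DSA")
# NIST post-quantum standards (FIPS 203, 204, 205) plus AES-256 for symmetric encryption.
QUANTUM_RESISTANT = ("ML-KEM", "ML-DSA", "SLH-DSA", "AES-256")

def classify(algorithm: str) -> str:
    name = algorithm.upper()
    if name.startswith(QUANTUM_RESISTANT):  # checked first so "ML-DSA" never matches "DSA"
        return "ok"
    if name.startswith(QUANTUM_VULNERABLE):
        return "migrate"
    return "review"  # unknown or borderline (e.g. AES-128): check manually

inventory = {  # hypothetical systems and the algorithms they use
    "vpn-gateway": "RSA-2048",
    "api-tls": "ECDHE-P256",
    "backups": "AES-256-GCM",
    "kem-pilot": "ML-KEM-768",
    "legacy-app": "AES-128-CBC",
}
for system, algo in inventory.items():
    print(f"{system:12} {algo:12} {classify(algo)}")
```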

Cybersecurity Becomes a Board-Level Priority

Cybersecurity isn’t a tech department problem anymore. It’s a business survival issue. The average data breach cost organizations $4.45 million in 2023, according to IBM’s Cost of a Data Breach report. Supply chain attacks such as the 2020 SolarWinds compromise reached thousands of organizations through a single vendor. And regulatory penalties for data breaches keep climbing in jurisdiction after jurisdiction.

The trends in cybersecurity are shifting toward proactive defense. Zero-trust architecture, which assumes every user and device could be compromised, is becoming the default model. Instead of building a perimeter and trusting everything inside it, zero-trust verifies every access request regardless of where it originates.
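A minimal sketch of that principle: every request is evaluated on identity, device health, and resource policy, and the source network is deliberately ignored. The field names and the policy table are hypothetical. Production systems delegate these checks to an identity provider and a device-management service.

```python
from dataclasses import dataclass

@dataclass
class AccessRequest:
    user: str
    mfa_verified: bool
    device_compliant: bool  # e.g. disk encrypted and OS patched, per a device-management check
    resource: str
    source_ip: str          # logged for auditing, but never used to grant trust

# Hypothetical least-privilege policy: which users may reach which resources.
POLICY = {"payroll-db": {"alice"}, "wiki": {"alice", "bob"}}

def authorize(req: AccessRequest) -> bool:
    """Evaluate every request on its own merits; being 'inside' the network earns nothing."""
    if not req.mfa_verified or not req.device_compliant:
        return False
    return req.user in POLICY.get(req.resource, set())

# An office IP address does not compensate for a non-compliant laptop.
print(authorize(AccessRequest("bob", True, False, "wiki", "10.0.0.12")))          # False
print(authorize(AccessRequest("alice", True, True, "payroll-db", "203.0.113.7")))  # True
```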

AI-powered threat detection is another major shift. Security teams can’t manually review millions of log entries. AI systems can identify anomalous patterns, flag potential breaches in real time, and even automate initial response actions. According to IBM’s 2023 Cost of a Data Breach report, organizations that used security AI and automation extensively identified and contained breaches about 100 days faster on average than those that didn’t.
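Commercial tools use far richer models, but the core idea of learning a baseline and flagging deviations can be sketched with a z-score over hourly failed-login counts. This is a plain statistical stand-in for the machine-learning models described above; the counts, the 24-hour window, and the threshold of 3 are assumptions.

```python
from statistics import mean, stdev

def flag_anomalies(counts: list[int], window: int = 24, threshold: float = 3.0) -> list[int]:
    """Return indexes of hours whose count is more than `threshold` standard deviations
    above the mean of the preceding `window` hours."""
    flagged = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and (counts[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# 24 quiet hours of failed logins, then a burst that looks like password spraying.
failed_logins = [3, 5, 4, 6, 2, 4, 5, 3, 4, 6, 5, 4, 3, 5, 4, 2, 6, 4, 5, 3, 4, 5, 4, 3, 48, 5]
print(flag_anomalies(failed_logins))  # [24]
```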

For businesses of all sizes, the practical takeaway is clear. Multi-factor authentication, encrypted communications, regular security audits, and employee training aren’t optional extras. They’re baseline requirements. The cost of prevention is always a fraction of the cost of recovery. If you haven’t reviewed your cybersecurity posture recently, now is the time.
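Multi-factor authentication is less mysterious than it looks. Most authenticator apps implement TOTP (RFC 6238): the server and the app share a secret and each derive a short code from the current 30-second time step. This is a compact standard-library sketch for illustration; a production system should use a vetted library, store secrets securely, and accept a small clock-drift window, which this `verify` does not.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional

def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238) using HMAC-SHA1 and RFC 4226 dynamic truncation."""
    now = time.time() if at is None else at
    counter = int(now // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # low 4 bits of the last byte pick a 4-byte window
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def verify(secret: bytes, submitted: str) -> bool:
    # Constant-time comparison avoids leaking how many digits matched.
    return hmac.compare_digest(totp(secret), submitted)

# RFC 6238 test vector: ASCII secret "12345678901234567890" at Unix time 59.
print(totp(b"12345678901234567890", at=59, digits=8))  # 94287082
```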

Internet of Things Matures Beyond Smart Home Gadgets

The Internet of Things started with smart thermostats and connected light bulbs. In 2026, IoT has grown up. Industrial IoT (IIoT) is now a $200+ billion market, with sensors monitoring everything from factory equipment health to crop moisture levels in agricultural fields.

Smart cities use IoT sensors to manage traffic flow, reduce energy waste, and monitor air quality. A single city can have millions of connected sensors generating data that helps municipal planners make better decisions. Barcelona’s smart water program, which includes sensor-controlled irrigation in public parks, is widely credited with saving around $58 million a year.

In manufacturing, IoT-connected machines report their own health status. When a motor starts vibrating outside normal parameters, the system flags it for maintenance before it fails. This predictive maintenance approach reduces unplanned downtime by 30-50% and extends equipment lifespan significantly.
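A minimal sketch of that rule: compute the root-mean-square (RMS) of a window of vibration-velocity samples and compare it with the level the motor normally stays under. The 2.8 mm/s alert level and the sample values are illustrative assumptions, not a standard for any particular machine.

```python
from math import sqrt

ALERT_RMS_MM_S = 2.8  # hypothetical alert level for this motor's vibration velocity

def rms(samples: list[float]) -> float:
    return sqrt(sum(s * s for s in samples) / len(samples))

def check_motor(samples: list[float]) -> str:
    level = rms(samples)
    if level > ALERT_RMS_MM_S:
        return f"schedule maintenance (RMS {level:.2f} mm/s)"
    return f"normal (RMS {level:.2f} mm/s)"

print(check_motor([1.1, -1.3, 1.2, -1.0, 1.2, -1.2]))  # healthy motor
print(check_motor([3.9, -4.2, 3.6, -4.0, 3.8, -4.1]))  # vibration well above normal
```

Real deployments usually learn the normal band per machine rather than hard-coding one number, but the decision has the same shape.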

The convergence of IoT with AI and edge computing is where things get really interesting. A connected sensor doesn’t just collect data anymore. It processes data locally, makes decisions, and only sends relevant information to the cloud. This reduces bandwidth costs and enables real-time responses that pure cloud-based IoT systems can’t match.
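Deciding what counts as “relevant” can be as simple as a deadband: the device keeps every reading locally but only transmits when the value moves more than a set amount from the last one it sent. The 0.5 °C deadband and the readings below are assumptions for illustration.

```python
def report_by_exception(readings: list[float], deadband: float = 0.5) -> list[tuple[int, float]]:
    """Return the (index, value) pairs the device would send upstream."""
    sent: list[tuple[int, float]] = []
    last_sent = None
    for i, value in enumerate(readings):
        # Always send the first reading, then only changes larger than the deadband.
        if last_sent is None or abs(value - last_sent) > deadband:
            sent.append((i, value))
            last_sent = value
    return sent

temps = [21.0, 21.1, 21.2, 21.1, 21.3, 22.4, 22.5, 22.3, 25.0, 25.1]
uploads = report_by_exception(temps)
print(f"sent {len(uploads)} of {len(temps)} readings: {uploads}")
```

Here only 3 of 10 readings leave the device, which is the bandwidth saving the paragraph describes.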

For businesses evaluating IoT, the entry barriers have dropped substantially. Platforms like AWS IoT Core and Azure IoT Hub make it possible to connect devices and build applications without deep embedded systems expertise (Google retired its Cloud IoT Core service in 2023). The hardware costs keep falling too. A basic IoT sensor module costs under $5 now, compared to $50+ just five years ago.

Blockchain Beyond Cryptocurrency

Blockchain suffered from overhype and then a severe credibility hangover. But the underlying technology, a distributed ledger that creates tamper-evident records, is finding genuine utility beyond speculative trading.
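What makes ledger records hard to alter quietly is hash chaining: each block stores the hash of the block before it, so changing any past record breaks the link that follows it. This single-machine sketch shows only that chaining; it leaves out the distribution and consensus that let many parties share one ledger without trusting each other. The lot, farm, and store values are made up.

```python
import hashlib
import json

def block_hash(block: dict) -> str:
    # Hash a canonical JSON encoding so identical content always yields the same hash.
    return hashlib.sha256(json.dumps(block, sort_keys=True).encode()).hexdigest()

def append(chain: list[dict], record: dict) -> None:
    prev = block_hash(chain[-1]) if chain else "0" * 64
    chain.append({"index": len(chain), "prev_hash": prev, "record": record})

def is_valid(chain: list[dict]) -> bool:
    """Each block must point at the hash of the block before it."""
    return all(chain[i]["prev_hash"] == block_hash(chain[i - 1]) for i in range(1, len(chain)))

ledger: list[dict] = []
append(ledger, {"lot": "A17", "event": "harvested", "farm": "Farm 12"})
append(ledger, {"lot": "A17", "event": "shipped"})
append(ledger, {"lot": "A17", "event": "received", "store": "Store 301"})
print(is_valid(ledger))                  # True
ledger[0]["record"]["farm"] = "Farm 99"  # quietly rewrite history
print(is_valid(ledger))                  # False: block 1 no longer matches block 0
```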

Supply chain transparency is the clearest use case. Walmart uses blockchain to trace food products from farm to shelf in seconds instead of days. If there’s a contamination issue, they can identify the source and pull affected products from stores within minutes. Maersk’s TradeLens platform used blockchain to digitize shipping documentation, reducing processing time by 40%, before Maersk and IBM shut it down in 2023 for lack of industry-wide adoption.

Decentralized finance (DeFi) continues evolving, offering lending, borrowing, and trading without traditional intermediaries. Smart contracts execute automatically when conditions are met, eliminating the need for middlemen in many financial transactions. The total value locked in DeFi protocols fluctuates, but the infrastructure keeps maturing.
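Smart contracts on platforms like Ethereum are usually written in languages such as Solidity, but the underlying logic is ordinary conditional code. This Python sketch models a simple escrow: funds go to the seller once the buyer confirms delivery, or back to the buyer after a deadline. The `Escrow` class and its rules are hypothetical, and it omits everything a blockchain adds, such as signatures, fees, and on-chain enforcement.

```python
from dataclasses import dataclass

@dataclass
class Escrow:
    buyer: str
    seller: str
    amount: int
    deadline: int           # block height or timestamp after which the buyer may reclaim funds
    delivered: bool = False
    settled_to: str = ""

    def confirm_delivery(self, caller: str) -> None:
        if caller != self.buyer:
            raise PermissionError("only the buyer can confirm delivery")
        self.delivered = True

    def settle(self, now: int) -> str:
        """Pay out according to the coded conditions; no intermediary decides."""
        if self.settled_to:
            raise RuntimeError("already settled")
        if self.delivered:
            self.settled_to = self.seller
        elif now > self.deadline:
            self.settled_to = self.buyer
        else:
            raise RuntimeError("conditions not met yet")
        return f"{self.amount} released to {self.settled_to}"

deal = Escrow(buyer="alice", seller="bob", amount=100, deadline=1_000)
deal.confirm_delivery("alice")
print(deal.settle(now=900))  # 100 released to bob
```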

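Digital identity is another promising application. Instead of sharing your actual data with every service you sign up for, blockchain-based identity systems let you prove claims about yourself (age, residency, employment) without revealing the underlying information. Estonia, whose national digital ID and e-Residency program underpin a digital government at national scale, is often cited as an early example, although its ID cards rely on conventional public-key cryptography rather than a blockchain.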
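One common building block for this is the salted hash commitment. The issuer publishes a hash of each claim, which in practice would be signed or anchored on a ledger. Later, the holder reveals only the claim they choose plus its salt. This sketch shows that mechanism alone: the claim names are hypothetical, the issuer’s signature is omitted, and real formats (for example IETF’s SD-JWT, or zero-knowledge proof systems) add considerably more.

```python
import hashlib
import json
import secrets

def commit(name: str, value, salt: str) -> str:
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()

def issue(claims: dict) -> tuple[set[str], dict]:
    """Issuer: return the public digests and the holder's private (salt, value) pairs."""
    private = {name: (secrets.token_hex(16), value) for name, value in claims.items()}
    digests = {commit(name, value, salt) for name, (salt, value) in private.items()}
    return digests, private

def verify(digests: set[str], name: str, value, salt: str) -> bool:
    """Verifier: check one disclosed claim against the issuer's digests."""
    return commit(name, value, salt) in digests

digests, wallet = issue({"over_18": True, "country": "EE", "employer": "Example Ltd"})
salt, value = wallet["over_18"]
print(verify(digests, "over_18", value, salt))  # True; other claims stay hidden behind salted hashes
print(verify(digests, "over_18", False, salt))  # False: an altered claim does not match
```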

Blockchain technology and cryptocurrency are related but distinct. You can use blockchain for supply chain management, digital identity, and record-keeping without any involvement in speculative crypto trading. Evaluate the technology on its own merits for your specific use case.

Connectivity: 5G Expansion and Early 6G Research

5G networks are still rolling out globally, but the impact is already measurable. 5G is designed to deliver download speeds of 1-10 Gbps, latency under 10 milliseconds, and connections for up to a million devices per square kilometer, although typical real-world speeds are lower. These capabilities enable applications that simply weren’t possible on 4G, from remote surgery to real-time industrial automation.

The real 5G story isn’t about faster phones. It’s about enabling IoT at scale, powering edge computing deployments, and making cloud gaming viable. Fixed wireless access (FWA) over 5G is bringing broadband-quality internet to rural areas where laying fiber isn’t economically feasible. T-Mobile’s home internet service serves over 5 million customers this way.

Meanwhile, research labs are already working on 6G, expected around 2030. Early concepts include reconfigurable intelligent surfaces (RIS) that can manipulate electromagnetic waves to optimize wireless coverage. Imagine surfaces in buildings and vehicles that actively shape how wireless signals travel, eliminating dead zones and improving energy efficiency.

6G research targets include terahertz frequencies, data speeds of 100 Gbps or more, and microsecond latency. That would enable holographic communications, fully immersive virtual environments, and digital twin simulations that run in perfect synchronization with their physical counterparts. It’s early days, but the research is well-funded and moving quickly.

Sustainable Technology and Green Computing

The tech industry’s energy consumption is staggering. Data centers alone consume about 1-2% of global electricity. A widely cited 2019 study estimated that training one large language model, including an extensive neural architecture search, emitted as much carbon as five cars over their entire lifetimes. As the industry grows, sustainability isn’t just an ethical choice. It’s becoming a regulatory and economic requirement.
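Estimates like these come down to simple arithmetic. Energy is hardware power × time × data-center overhead (PUE, power usage effectiveness), and emissions are energy × the grid’s carbon intensity. Every input below is a hypothetical assumption chosen to show the calculation, not a measurement of any real model.

```python
def training_emissions_tonnes(gpus: int, watts_per_gpu: float, hours: float,
                              pue: float, kg_co2_per_kwh: float) -> float:
    """Estimate CO2 emissions (tonnes) for a training run."""
    energy_kwh = gpus * watts_per_gpu * hours / 1000 * pue  # PUE adds cooling and power overhead
    return energy_kwh * kg_co2_per_kwh / 1000               # kg to tonnes

# Hypothetical run: 1,000 GPUs at 400 W for 30 days, PUE 1.2, grid at 0.4 kg CO2/kWh.
print(f"{training_emissions_tonnes(1000, 400, 30 * 24, 1.2, 0.4):.0f} t CO2")  # 138 t CO2
```

The formula also shows the two main levers: a cleaner grid lowers `kg_co2_per_kwh`, and more efficient cooling lowers `pue`.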

Green computing initiatives are accelerating across every major tech company. Google has matched 100% of its annual electricity use with renewable energy purchases since 2017. Microsoft pledged to be carbon-negative by 2030. Amazon is building massive solar and wind farms to power AWS data centers. But beyond the corporate pledges, practical innovations are making real differences.

Liquid cooling systems for data centers can reduce energy consumption by 30-40% compared to traditional air cooling. More efficient chip architectures, like the ARM-based processors in Apple’s M-series, deliver better performance per watt than the Intel x86 chips they replaced. And new materials science breakthroughs, like elastocaloric materials for heating and cooling, could replace energy-hungry HVAC systems with far more efficient alternatives.

For businesses, the practical angle is straightforward. Energy-efficient infrastructure costs less to operate. Cloud providers are offering “green zones” where you can run workloads on renewable energy at competitive prices. Choosing sustainable technology isn’t just good PR. It directly impacts your operating costs as energy prices remain volatile.

Automation and Robotic Process Automation

Automation technology has moved far beyond factory robots. Robotic Process Automation (RPA) handles repetitive digital tasks like data entry, invoice processing, and report generation. Combined with AI, these tools can now handle unstructured data, make decisions based on context, and learn from corrections.
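A classic RPA task is pulling fields out of invoices and checking them before they reach an accounting system. This sketch uses regular expressions on invoice text; the field labels, layout, and values are hypothetical. The AI-assisted tools described above exist precisely because real invoices rarely follow one tidy layout.

```python
import re
from decimal import Decimal

INVOICE = """
Invoice No: INV-2041
Date: 2026-03-14
Subtotal: 1,200.00
Tax: 240.00
Total: 1,440.00
"""

def extract(text: str) -> dict:
    def field(label: str) -> str:
        match = re.search(rf"^{label}:\s*(.+)$", text, re.MULTILINE)
        if match is None:
            raise ValueError(f"missing field: {label}")
        return match.group(1).strip()

    def money(label: str) -> Decimal:
        return Decimal(field(label).replace(",", ""))  # Decimal avoids float rounding errors

    return {
        "number": field("Invoice No"),
        "date": field("Date"),
        "subtotal": money("Subtotal"),
        "tax": money("Tax"),
        "total": money("Total"),
    }

def validate(inv: dict) -> list[str]:
    """Flag problems for a human instead of posting bad data."""
    issues = []
    if inv["subtotal"] + inv["tax"] != inv["total"]:
        issues.append("subtotal + tax does not equal total")
    return issues

invoice = extract(INVOICE)
print(invoice["number"], invoice["total"], validate(invoice) or "OK")
```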

The numbers are compelling. Companies implementing RPA report 25-50% cost reduction in targeted processes. An insurance company that automated claims processing reduced handling time from 12 days to 3 hours. A logistics firm that automated shipment tracking eliminated 80% of manual data entry errors.

Hyperautomation, the combination of RPA with AI, machine learning, and process mining, is the next frontier. Instead of automating individual tasks, companies are automating entire workflows from end to end. Process mining tools analyze how work actually flows through an organization, identify bottlenecks, and suggest which processes to automate first for maximum impact.
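Process mining typically starts from an event log of case IDs, activities, and timestamps. A minimal sketch of one core technique, the directly-follows graph, counts how often one activity immediately follows another and how long that handoff takes on average, which points straight at bottlenecks. The log below is invented for illustration.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical event log: (case id, activity, timestamp)
LOG = [
    ("c1", "receive", "2026-01-05 09:00"), ("c1", "approve", "2026-01-05 15:00"), ("c1", "pay", "2026-01-06 10:00"),
    ("c2", "receive", "2026-01-05 10:00"), ("c2", "approve", "2026-01-07 10:00"), ("c2", "pay", "2026-01-07 12:00"),
    ("c3", "receive", "2026-01-06 08:00"), ("c3", "approve", "2026-01-06 20:00"), ("c3", "pay", "2026-01-07 08:00"),
]

def directly_follows(log):
    """Map (activity A, activity B) to the hours between A and the next event B in each case."""
    cases = defaultdict(list)
    for case, activity, ts in log:
        cases[case].append((datetime.strptime(ts, "%Y-%m-%d %H:%M"), activity))
    edges = defaultdict(list)
    for events in cases.values():
        events.sort()  # order each case's events by time
        for (t1, a), (t2, b) in zip(events, events[1:]):
            edges[(a, b)].append((t2 - t1).total_seconds() / 3600)
    return edges

edges = directly_follows(LOG)
# Print handoffs from slowest to fastest average wait.
for (a, b), waits in sorted(edges.items(), key=lambda e: -sum(e[1]) / len(e[1])):
    print(f"{a} -> {b}: {len(waits)} cases, avg wait {sum(waits) / len(waits):.1f} h")
```

In this toy log, approval is the bottleneck: cases wait an average of 22 hours between receipt and approval, twice as long as the step after it.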

The tools have also become more accessible. Platforms like UiPath, Automation Anywhere, and Microsoft Power Automate offer low-code interfaces that let business users build their own automations without writing code. A marketing manager can automate their reporting workflow. An HR coordinator can automate onboarding tasks. The barrier between “I wish this was automated” and “I automated it myself” has never been lower.

Immersive Technology: AR, VR, and Spatial Computing

The metaverse hype has cooled, but the underlying technology keeps advancing. Apple’s Vision Pro brought spatial computing to consumer attention. Meta continues investing billions in VR hardware and software. And the real growth is happening in enterprise applications where immersive technology solves actual problems.

Architecture and construction firms use VR to walk clients through buildings before a single brick is laid. Engineers use AR overlays to see hidden infrastructure (pipes, wiring, structural supports) while working on-site. Medical schools use VR simulations so students can rehearse surgical procedures as often as they need, supplementing instructional videos and cadaver practice.

The training market is particularly promising. Walmart has trained over 1 million employees using VR, covering everything from customer service scenarios to active shooter preparedness. A PwC study of VR soft-skills training found that employees learned up to four times faster than in the classroom and felt significantly more confident applying what they had learned.

Spatial computing, the broader category that encompasses AR, VR, and mixed reality, is creating new interaction paradigms. Instead of clicking buttons on a flat screen, you manipulate 3D objects with your hands. Instead of reading documentation, you see step-by-step instructions overlaid on the actual equipment you’re servicing. This isn’t science fiction anymore. It’s happening in warehouses, hospitals, and factories right now.

AI-Driven Scientific Discovery

One of the most exciting and underappreciated technology trends is AI’s role in accelerating scientific research. AlphaFold predicted the 3D structure of virtually every known protein, a task that would have taken human researchers decades. This single breakthrough has implications across medicine, agriculture, and materials science.

AI is discovering new antibiotics by screening millions of chemical compounds in hours instead of years. It’s identifying new materials for batteries and solar cells by predicting their properties before they’re synthesized. Climate scientists use AI models to improve weather prediction accuracy and model long-term climate scenarios with greater precision.

Digital twins, virtual replicas of physical systems, are another intersection of AI and science. Engineers create digital twins of factories, power grids, and even entire cities. They run simulations on these virtual models to test changes, predict failures, and optimize operations before applying anything to the real world. A digital twin of a wind farm can predict when each turbine will need maintenance and adjust power output to maximize efficiency.

Synthetic data generation is solving one of research’s biggest challenges: accessing enough data while protecting privacy. AI can generate realistic but artificial datasets that preserve the statistical properties of real data without exposing any individual’s information. This enables research collaboration across organizations and borders that privacy regulations would otherwise prevent.
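A minimal sketch of the idea for two numeric columns: estimate the means, standard deviations, and correlation of the real data, then sample new rows from a bivariate normal distribution with those parameters. This is a deliberately simple stand-in. It preserves only those summary statistics and offers no formal privacy guarantee on its own; dedicated tools may add safeguards such as differential privacy. The “real” data here is itself randomly generated for the example.

```python
import random
from math import sqrt
from statistics import mean, stdev

def pearson(xs, ys) -> float:
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)
    return cov / (stdev(xs) * stdev(ys))

def synthesize(xs, ys, n: int, rng: random.Random) -> list[tuple[float, float]]:
    """Sample n synthetic (x, y) rows matching the means, std devs, and correlation of the input."""
    mx, my, sx, sy = mean(xs), mean(ys), stdev(xs), stdev(ys)
    rho = pearson(xs, ys)
    rows = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        # Mix two independent standard normals so the pair has correlation rho.
        z2 = rho * z1 + sqrt(1 - rho ** 2) * z2
        rows.append((mx + sx * z1, my + sy * z2))
    return rows

rng = random.Random(42)
# Stand-in for sensitive records: age and annual spend, positively correlated.
ages = [rng.gauss(40, 10) for _ in range(5000)]
spend = [50 * a + rng.gauss(0, 300) for a in ages]

synthetic = synthesize(ages, spend, 5000, rng)
syn_ages, syn_spend = zip(*synthetic)
print(f"real:      mean age {mean(ages):.1f}, corr {pearson(ages, spend):.2f}")
print(f"synthetic: mean age {mean(syn_ages):.1f}, corr {pearson(syn_ages, syn_spend):.2f}")
```

No synthetic row corresponds to a real person, yet an analyst studying the age-spend relationship gets essentially the same answer from either dataset.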

How Businesses Should Approach These Trends

Technology trends are only useful if you can translate them into business decisions. Here’s a practical framework for evaluating which trends matter to your specific situation.

First, assess the maturity level. AI and automation are production-ready right now. You can implement them today and see results within weeks. Quantum computing and 6G are interesting but won’t impact your operations for years. Prioritize based on where the technology actually sits, not where conference speakers claim it’s heading.

Second, look for convergence points. The most powerful opportunities emerge where trends overlap. AI plus IoT gives you predictive maintenance. AI plus edge computing gives you real-time personalization. Blockchain plus IoT gives you supply chain transparency. These combinations often deliver more value than any single technology alone.

Third, start with a specific problem, not a technology. “We should use AI” is not a strategy. “We need to reduce customer support response time from 4 hours to 15 minutes” is a problem that AI might solve. Define the problem first, then evaluate which technology fits best.

Fourth, budget for learning, not just deployment. Technology adoption requires training, process changes, and organizational adjustment. The companies that succeed with new technology allocate 30-40% of their technology budget to change management and training, not just software licenses and hardware.

The technology landscape of 2026 rewards companies that are strategic, not reactive. Don’t chase every trend. Pick the ones that solve your actual problems, start small, measure results, and scale what works. That’s how you turn technology trends into competitive advantage.

Frequently Asked Questions

What is the most impactful technology trend right now?

Generative AI is the most impactful trend right now, touching every industry from healthcare to marketing to software development. It’s not just chatbots. AI is being embedded into existing tools and workflows, creating a new category of AI-augmented products. The second most impactful trend is cybersecurity evolution, as AI-powered threats require AI-powered defenses. Both trends are creating massive business opportunities and workforce changes simultaneously.

How will quantum computing affect small businesses?

For most small businesses, quantum computing won’t have a direct impact for 5-10 years. Current quantum computers are still in the research and early commercial phase. However, the cybersecurity implications are real: quantum computing will eventually break the public-key encryption in wide use today. Start planning by adopting the quantum-resistant encryption standards NIST published in 2024 as your vendors add support for them. For now, focus on AI and edge computing trends that deliver immediate business value rather than investing in quantum readiness.

Which technology trends should I invest in first?

Start with AI integration into your existing workflows. This delivers the fastest ROI with the lowest risk. Use AI tools for content creation, customer support automation, data analysis, and process optimization. Next, invest in cybersecurity upgrades because AI-powered threats are increasing. Edge computing and IoT investments make sense for businesses with physical operations. Don’t chase every trend. Pick 1-2 technologies that directly solve problems you’re facing today.

Is blockchain technology still relevant in 2026?

Blockchain has moved past the hype cycle and found legitimate use cases in supply chain management, digital identity verification, and decentralized finance. However, for most small businesses, blockchain isn’t a priority. It’s most relevant for businesses dealing with supply chain transparency, cross-border payments, or digital asset management. NFTs have mostly cooled down, but the underlying distributed ledger technology continues to mature. Don’t invest in blockchain unless you have a specific problem it solves better than traditional solutions.

How can I keep up with rapidly changing technology trends?

Follow a curated set of sources rather than trying to read everything. Subscribe to newsletters like TLDR Tech, The Pragmatic Engineer, and Benedict Evans’ newsletter. Listen to podcasts like a16z and The Vergecast during commutes. Set aside 30 minutes weekly for tech reading. Focus on trends relevant to your industry rather than every emerging technology. Join one professional community where practitioners discuss real implementations, not just headlines. The goal is staying informed enough to make smart decisions, not becoming a tech futurist.