The User’s Checklist: Ten Signals of a Trustworthy Software Review

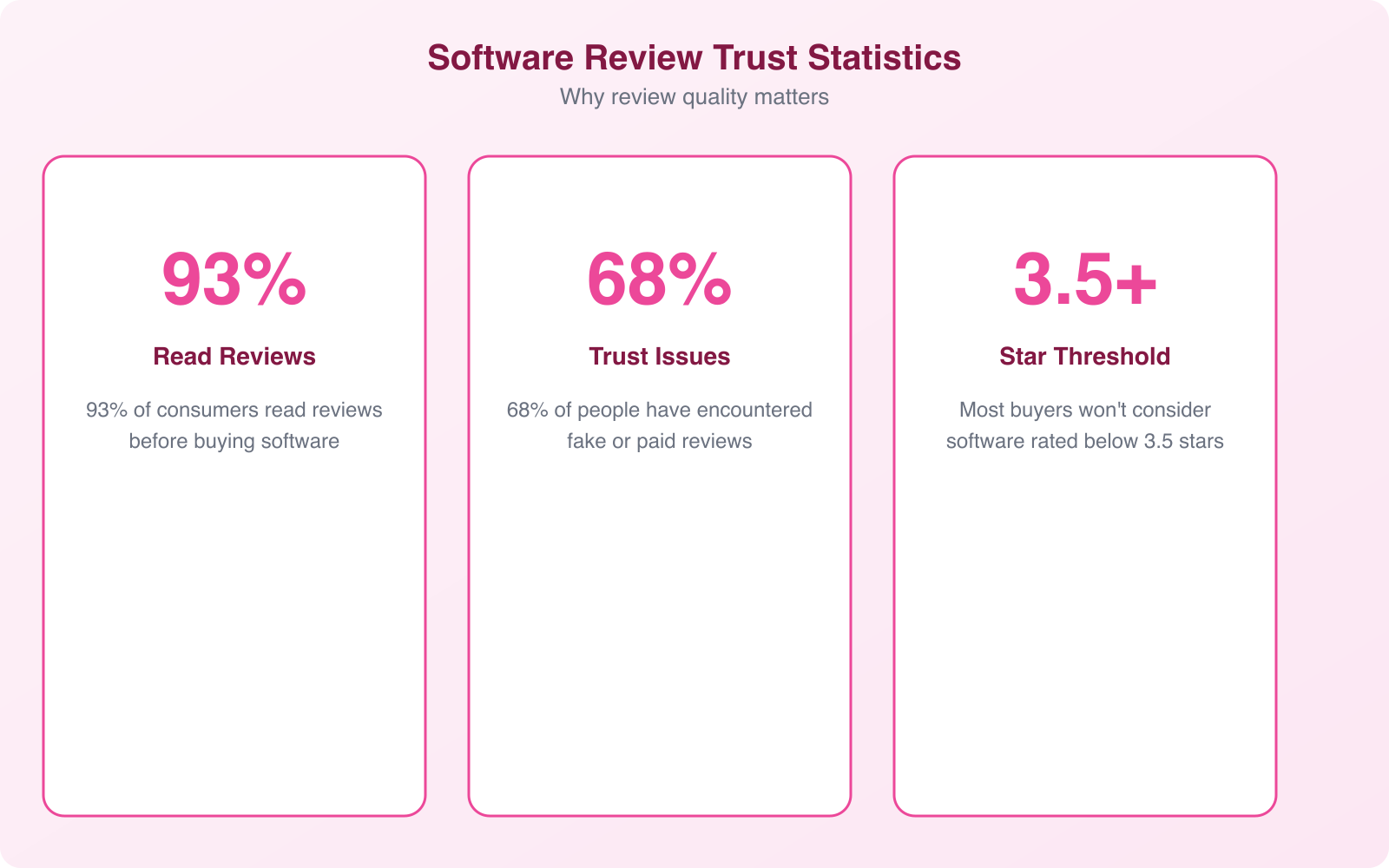

I’ve written hundreds of software reviews and read thousands more. The gap between a genuinely helpful review and a glorified sales pitch is enormous, but most readers can’t tell the difference until they’ve already wasted money on bad software. Fake reviews, AI-generated fluff, and paid endorsements disguised as honest opinions have made the landscape worse than ever.

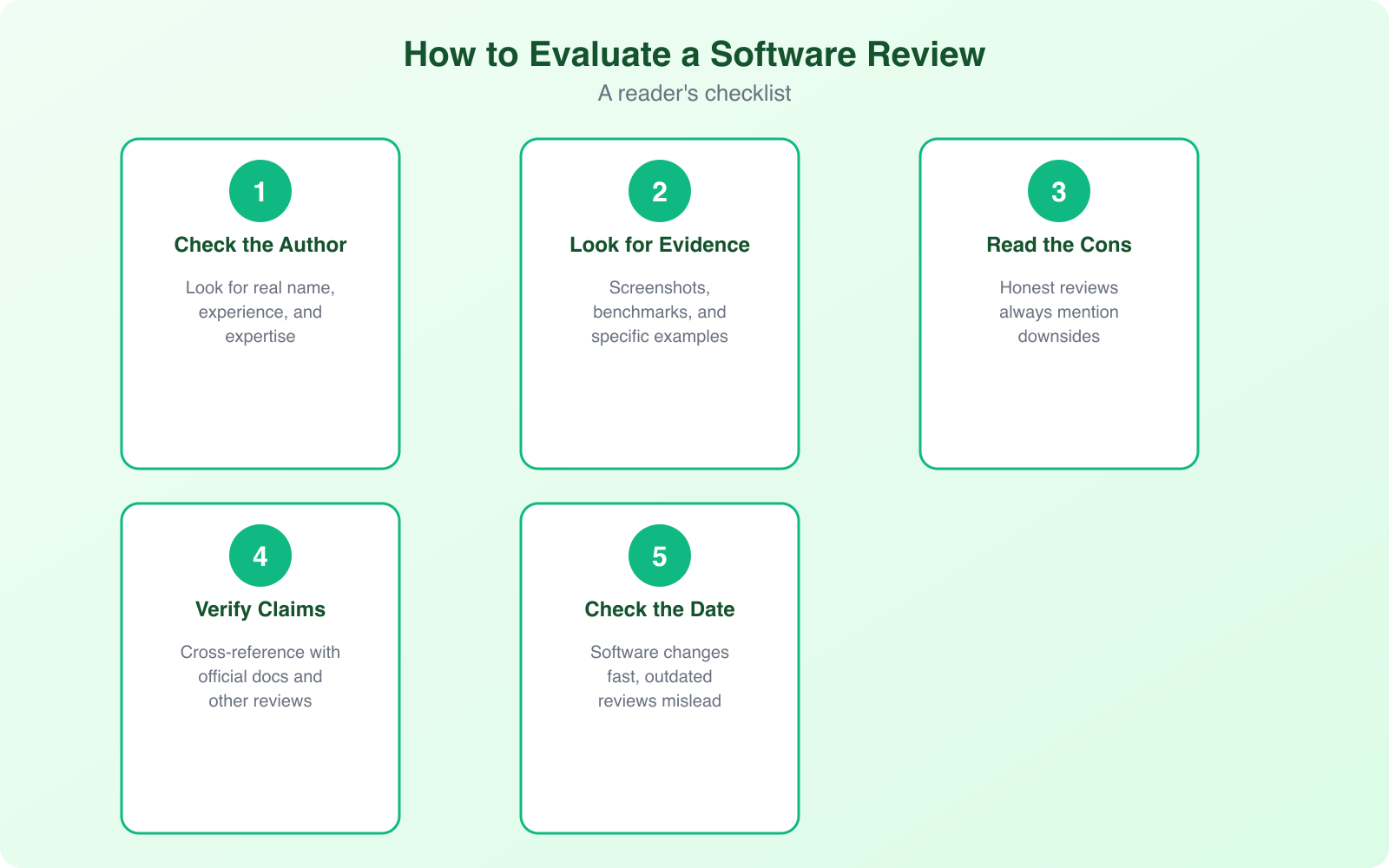

Here are 10 signals I look for when evaluating whether a software review is worth trusting. Use this as your personal checklist before you take any review’s recommendation seriously.

Real Experience With the Product

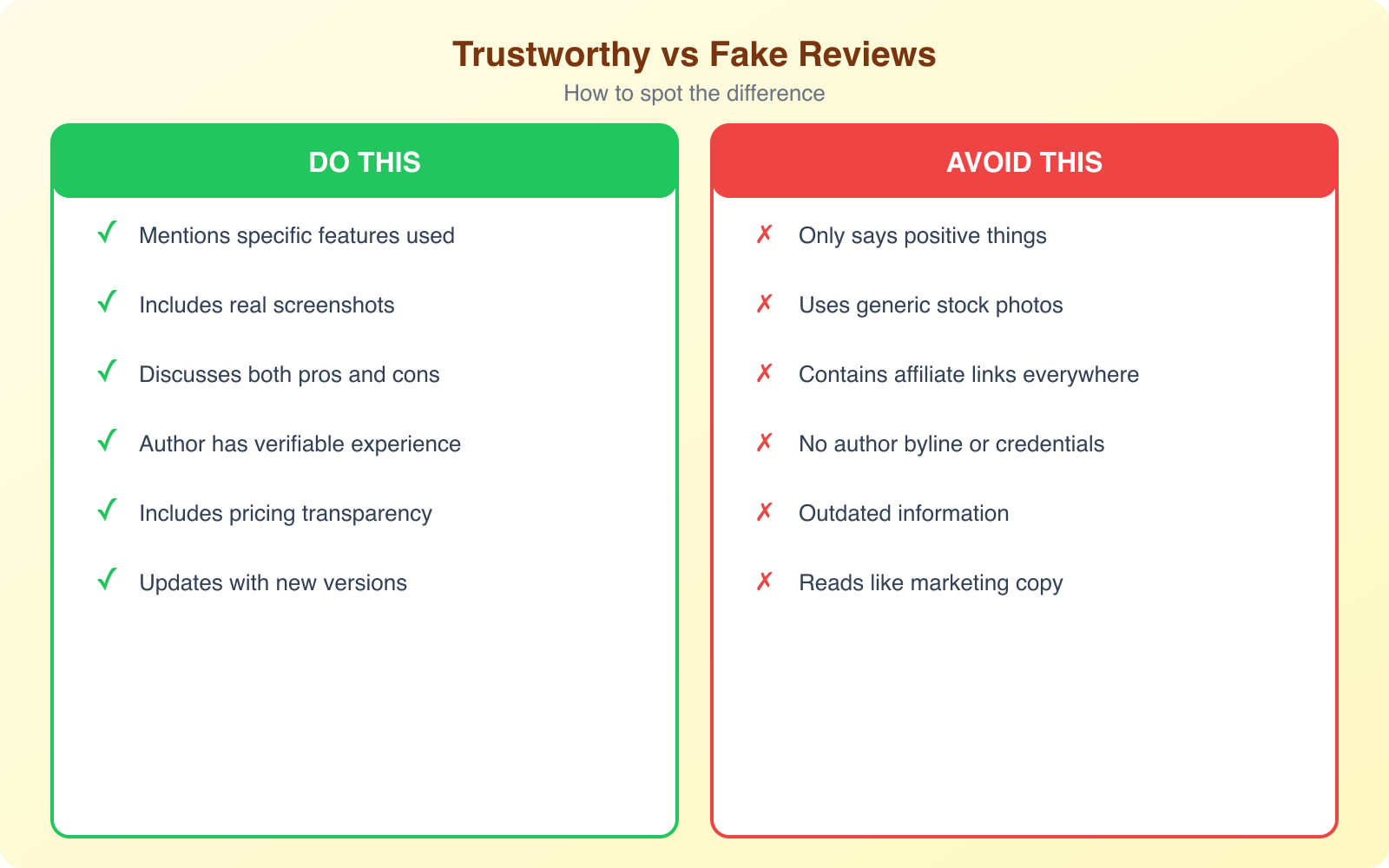

This sounds obvious, but you’d be amazed how many “reviewers” never actually use the product. They scrape feature lists from the company’s website, rewrite the marketing copy, and call it a review. I’ve seen this pattern repeatedly across affiliate sites, comparison blogs, and even some well-known publications.

A trustworthy review includes details that only come from hands-on use. Things like “the import wizard crashed twice when I tried uploading a CSV with 10,000 contacts” or “the mobile app takes 4-5 seconds to load on my Pixel 8.” These specifics can’t be faked easily.

Look for screenshots of the actual interface, not stock photos or marketing images. Real reviewers show their dashboard, their settings page, their test data. The review should read like someone sat down, used the product for at least a week (ideally longer), and documented what happened.

Professional review sites that cover regulated industries rely heavily on this standard. Their credibility depends on reviewers who test products in real conditions and document their findings transparently.

Clear Purpose and Direct Focus

A trustworthy review starts by telling you exactly what it covers and who it’s for. “This review evaluates Notion’s project management features for small teams of 5-15 people” is infinitely more useful than “Let’s look at Notion.”

Focus matters because software serves different audiences differently. A CRM that works perfectly for a 3-person startup might be completely wrong for a 200-person sales team. Good reviewers know who their readers are and what they expect, and they frame the review accordingly.

When a review tries to cover everything about a product in one article, it usually covers nothing well. Depth beats breadth in reviews. I’d rather read 2,000 words on how a tool handles one specific workflow than 5,000 words that skim every feature.

Honesty About Strengths and Weaknesses

This is the single biggest tell. If a review has zero negative things to say, it’s an advertisement, not a review. Every product has weaknesses. Every single one. Even the best software I’ve ever used had annoying quirks, missing features, or pricing that didn’t make sense for certain use cases.

A trustworthy reviewer isn’t afraid to say “the mobile app is clunky” or “the pricing jumps dramatically from the Basic to Pro plan” or “customer support took 3 days to respond to my ticket.” These honest observations give readers the information they need to decide whether those weaknesses matter for their specific situation.

One person might care deeply about mobile performance. Another might never use the app on their phone. Honest reporting lets each reader weigh the tradeoffs based on their own priorities.

Count the number of negative points versus positive points. A trustworthy review usually has a ratio of roughly 3:1 or 4:1 positive to negative. All positive means advertising. Equal parts means the reviewer probably shouldn’t be recommending the product at all.
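If you want to make that count explicit, here is a minimal sketch of the ratio check in Python. The function name, the labels, and the exact cutoffs are my own assumptions for illustration; the text above only says "roughly 3:1 or 4:1," so treat the band as a rule of thumb, not a hard threshold.

```python
def classify_balance(positives: int, negatives: int) -> str:
    """Label a review by its counts of positive and negative points.

    Cutoffs are illustrative assumptions, not fixed rules.
    """
    if positives < 0 or negatives < 0:
        raise ValueError("counts must be non-negative")
    if positives == 0 and negatives == 0:
        return "no points counted"
    if negatives == 0:
        # Nothing critical at all: reads like an advertisement.
        return "advertising"
    ratio = positives / negatives
    if ratio <= 1.0:
        # Equal parts (or worse): hard to justify a recommendation.
        return "not a recommendation"
    if 3.0 <= ratio <= 4.0:
        # The rough 3:1 to 4:1 band described above.
        return "typical trustworthy balance"
    return "outside the usual range"


# Example: 9 positive points and 3 negative points is a 3:1 ratio.
print(classify_balance(9, 3))
```

A result of "outside the usual range" is not a verdict. It only means the review deserves a closer read.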

A Fair and Balanced Tone

Tone reveals bias faster than content does. A review that uses breathless excitement (“This is THE BEST email tool EVER!”) or hostile dismissal (“This garbage software should be shut down”) isn’t giving you information. It’s performing an emotion.

Fair tone treats positive and negative observations with the same level of care and specificity. It doesn’t oversell the good parts or dramatize the bad parts. You should feel like you’re getting a balanced briefing from someone who wants to help you make a good decision, not someone who wants to convince you of a predetermined conclusion.

Watch for subtle manipulation too. Phrases like “unlike inferior alternatives” or “the only tool that actually works” are red flags. Good reviewers present facts and let you draw conclusions.

Specific Descriptions With Real Examples

Vague reviews waste your time. “The interface is intuitive” tells you nothing. “I was able to set up a 5-step email automation sequence in 12 minutes without reading any documentation” tells you everything.

Trustworthy reviews use specific moments from actual usage to describe the experience. A feature that loads in 1.2 seconds, a report builder that crashed when filtering more than 10,000 records, a customer support chat that resolved an issue in 8 minutes. These concrete details create a picture of what using the software actually feels like.

When a reviewer uses details pulled from active testing, you can picture your own experience more clearly. The review transforms from opinion to documented experience.

Clean Writing and Honest Structure

How a review is written says as much as what it says. Clean, direct language shows confidence and expertise. Marketing-speak (“revolutionary paradigm-shifting solution”) shows that someone is selling, not reviewing.

Good structure matters too. A review should follow a logical flow: what the product does, who it’s for, how it performs in practice, what it costs, what the alternatives are, and who should (and shouldn’t) buy it. If the structure feels random or repetitive, the reviewer probably didn’t plan the piece carefully, which suggests they didn’t evaluate the product carefully either.

Short sentences work well for technical explanations. Longer ones work for broader context. The balance should feel natural, not forced. If a review reads like it was generated by AI and lightly edited, it probably was.

Current and Up-to-Date Information

Software changes constantly. A review written about a product’s 2023 version is potentially useless for someone evaluating the 2026 version. Features get added, removed, or completely redesigned. Pricing changes. Entire product categories shift.

Check the publication date and any “last updated” timestamps. Trustworthy review sites update their content when significant changes happen. If a review references features that no longer exist or pricing that changed 2 years ago, it wasn’t maintained properly.

Screenshots are another timestamp clue. If the UI in the screenshots looks completely different from the current product, the review is outdated.

Transparent Testing Conditions

A reliable review explains the environment where the software was tested. This seems like a small detail, but it matters enormously. A project management tool that runs smoothly on a MacBook Pro with 32GB of RAM might lag terribly on a budget Chromebook. A CRM that handles 500 contacts effortlessly might buckle under 50,000.

Look for details like: what device was used, how long the testing period lasted, what size team or dataset was involved, and whether the reviewer tested the free plan or a paid tier. These context clues help you determine whether the reviewer’s experience is relevant to yours.

Affiliate and Sponsorship Disclosure

This one’s non-negotiable. Any trustworthy reviewer discloses their financial relationship with the products they review. Affiliate links aren’t inherently bad. I use them myself. But readers deserve to know when a review might be influenced by commission structures.

In the United States, the FTC requires reviewers to disclose material connections such as affiliate commissions, and similar rules exist in the EU and UK. If a review site doesn’t have a visible disclosure statement, that’s a red flag about its overall editorial standards.

Disclosure doesn’t disqualify a review from being trustworthy. What matters is whether the affiliate relationship influences the recommendation. A reviewer who writes honest reviews with affiliate links is more trustworthy than a reviewer who writes dishonest reviews without them.

Consistency Across Multiple Reviews

A single review can be misleading. The real test of a reviewer’s trustworthiness is their body of work. Do they apply the same standards across different products? Do they use the same criteria for rating? Are their recommendations consistent with their stated values?

If a reviewer rates every product 9/10 or higher, their ratings are meaningless. If they recommend completely different “best” products in the same category within the same month, something’s off. Consistency shows that the reviewer has a methodology, not just an opinion for whoever pays the most.

Read at least 3-4 reviews from the same author or publication before trusting their recommendation. Patterns emerge quickly. Consistent quality signals reliability. Inconsistent quality signals that editorial standards vary by advertiser.
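The "everything gets 9/10" pattern is easy to check once you have jotted down a reviewer’s scores. This is a small sketch under my own assumptions: the function name is hypothetical, ratings are on a 10-point scale, and the minimum of three reviews mirrors the 3-4 reviews suggested above.

```python
def ratings_look_inflated(
    ratings: list[float], floor: float = 9.0, min_reviews: int = 3
) -> bool:
    """Return True when every rating is at or above `floor`.

    With fewer than `min_reviews` ratings there is too little
    evidence to judge, so the function returns False.
    """
    if len(ratings) < min_reviews:
        return False
    return all(rating >= floor for rating in ratings)


# Example: three reviews, all scored 9 or higher.
print(ratings_look_inflated([9.0, 9.5, 10.0]))
```

A True result does not prove bias. It only tells you the scores carry no information, so you have to rely on the written reviews instead.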

How to Spot AI-Generated Reviews

With AI writing tools becoming widespread, the review landscape is flooding with machine-generated content produced without anyone touching the actual product. Here’s how to spot it:

- Generic language: AI reviews tend to use the same phrases repeatedly. “Seamless experience,” “robust features,” and “intuitive interface” appear in every paragraph without specifics.

- No original screenshots: A tool that never ran the product has no screenshots of its own. If every image is a stock photo or pulled from the product’s marketing materials, be skeptical.

- Perfect structure, zero personality: AI writes in predictable patterns. Real reviewers have quirks, preferences, and occasional tangents that feel human.

- Absence of specific numbers: “The software is fast” versus “Pages loaded in 1.8 seconds on my test.” AI defaults to vague claims.

- No mention of bugs or limitations: AI tends to generate positive content unless specifically prompted otherwise. An all-positive review is suspect.
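Two of these signs, generic phrases and missing numbers, can be roughly approximated with a few lines of Python. This is a crude sketch, not a detector: the phrase list contains only the three examples quoted above, the function names are my own, and "contains a digit" is a deliberately simple stand-in for "cites specific numbers."

```python
import re

# Only the three phrases quoted in the list above; extend as needed.
GENERIC_PHRASES = ("seamless experience", "robust features", "intuitive interface")


def generic_phrase_hits(text: str) -> dict[str, int]:
    """Count case-insensitive occurrences of each generic phrase."""
    lowered = text.lower()
    return {phrase: lowered.count(phrase) for phrase in GENERIC_PHRASES}


def has_specific_numbers(text: str) -> bool:
    """Crude check: does the text contain any digit at all?"""
    return re.search(r"\d", text) is not None


sample = (
    "The tool offers a seamless experience and robust features. "
    "The intuitive interface makes for a seamless experience."
)
print(generic_phrase_hits(sample))
print(has_specific_numbers(sample))
```

Plenty of human writers use these phrases too, so a high count is a prompt to look harder, not proof of anything.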

The presence of AI-generated content doesn’t automatically mean a review is bad. But it should make you more cautious. A good review site uses AI as a writing aid while ensuring human testing and editorial oversight remain at the core.

What Makes a Review Worth Your Time

These 10 signals work together. A review might nail 8 out of 10 and still be worth trusting. But if it fails on the fundamentals, specifically real experience, honesty about weaknesses, and transparent disclosures, walk away and find a better source.
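For readers who like an explicit rule, the decision above can be sketched in Python. The signal names are my own shorthand for the ten sections, and treating "8 out of 10" as a minimum pass count is an assumption on my part, not a hard rule from the checklist.

```python
# Shorthand labels for the ten signals (hypothetical names).
SIGNALS = [
    "real_experience",
    "clear_focus",
    "honest_weaknesses",
    "balanced_tone",
    "specific_examples",
    "clean_writing",
    "current_information",
    "testing_conditions",
    "disclosure",
    "consistency",
]

# The three fundamentals a review must not fail.
FUNDAMENTALS = {"real_experience", "honest_weaknesses", "disclosure"}


def worth_trusting(passed: set[str], min_passed: int = 8) -> bool:
    """Apply the checklist: all fundamentals, plus enough signals overall."""
    unknown = passed - set(SIGNALS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    if not FUNDAMENTALS <= passed:
        # Failing any fundamental overrides the total count.
        return False
    return len(passed) >= min_passed


# Example: everything passes except writing quality and tone (8 of 10).
print(worth_trusting(set(SIGNALS) - {"clean_writing", "balanced_tone"}))
```

The fundamentals are checked first on purpose: a review that passes nine signals but hides its sponsorship still fails.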

Your time is valuable. Reading a bad review doesn’t just waste 10 minutes. It can lead you to buy the wrong software, which wastes months of setup time and training costs and can lock you into an annual contract. The 5 minutes you spend evaluating whether a review is trustworthy can save you thousands of dollars and countless hours of frustration.

When you find reviewers who consistently meet these standards, bookmark them. Follow them. They’re a rare and valuable resource in a landscape cluttered with noise. And when you’re reading reviews on this site, hold me to the same standards. That’s how trust works.

Frequently Asked Questions

How can I tell if a software review is paid or sponsored?

Look for disclosure statements near the top or bottom of the review. Phrases like “sponsored,” “partner,” or “affiliate” indicate a financial relationship. Also check if the review site only reviews products from one company or always gives the same brand the top spot. Consistent bias toward one brand across many reviews is a strong indicator of sponsorship.

Are user reviews on app stores more trustworthy than professional reviews?

Not necessarily. App store reviews suffer from review bombing (coordinated waves of negative ratings, sometimes from competitors) and incentivized reviews (companies offering discounts for 5-star ratings). Professional reviews offer more depth and methodology. The best approach is to read both: professional reviews for detailed analysis and user reviews for patterns across many experiences.

How many reviews should I read before buying software?

At least 3-4 reviews from different sources. Look for consensus on strengths and weaknesses. If multiple independent reviewers mention the same problem, it’s likely real. If only one review mentions a specific flaw and others don’t, it might be an edge case or outdated information.

Can AI-generated reviews still be useful?

AI-generated reviews that compile publicly available information can serve as a starting point for research, but they can’t replace hands-on testing. They’re useful for getting a quick feature overview or understanding general pricing structures. For actual purchase decisions, you need reviews from people who’ve used the product in real workflows.

What’s the most important signal of a trustworthy review?

Honesty about weaknesses. Every product has flaws, and a review that mentions none is either uninformed or dishonest. A reviewer who openly discusses what the product does poorly earns more trust than one who only highlights strengths, even if everything else about the review is professionally written.