NVIDIA Wants to Put Servers in Space. Here’s Why It Might Work

NVIDIA’s biggest problem isn’t building faster GPUs. It’s finding enough electricity to run them.

AI training clusters now consume more power than small cities. The H100 alone draws up to 700 watts per chip, and a single eight-GPU DGX H100 system pulls over 10 kW. Scale that to the hundreds of thousands of GPUs that companies like Meta, Google, and OpenAI are deploying, and you’re looking at power demands approaching the gigawatt range. Grid interconnect queues in the US are backed up 4-5 years. Transformer shortages are real. Community pushback against new data center construction is growing louder every quarter.

So NVIDIA is backing a solution that sounds like science fiction but has actual hardware in orbit right now: put the servers in space.

Why orbit makes engineering sense for AI computing

The argument for space-based computing comes down to three physics problems that Earth can’t easily solve: power generation, heat dissipation, and land constraints.

In low Earth orbit (LEO), solar panels receive about 1,361 watts per square meter of unfiltered sunlight. No atmosphere to block it. No weather. Almost no night if you pick the orbit correctly: a dawn-dusk sun-synchronous orbit stays in sunlight nearly year-round. Compare that to terrestrial solar, which averages 150-300 W/m² after you account for clouds, atmospheric absorption, and nighttime. Per unit of panel area, orbital solar collects roughly 4.5-9x more energy.
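The per-area advantage is just the ratio of the two flux figures quoted above. A quick sketch of that arithmetic, using only those numbers (the function and constant names are mine, chosen for illustration):

```python
# Figures quoted in the paragraph above.
ORBITAL_FLUX_W_M2 = 1361.0               # sunlight above the atmosphere
TERRESTRIAL_AVG_W_M2 = (150.0, 300.0)    # time-averaged ground-level range


def flux_advantage(orbital: float, ground: float) -> float:
    """Ratio of incident solar power per square meter, orbit vs. ground."""
    return orbital / ground


# The best ground site gives the smallest advantage, the worst gives the largest.
low_end = flux_advantage(ORBITAL_FLUX_W_M2, max(TERRESTRIAL_AVG_W_M2))
high_end = flux_advantage(ORBITAL_FLUX_W_M2, min(TERRESTRIAL_AVG_W_M2))

print(f"Orbit receives {low_end:.1f}x to {high_end:.1f}x more sunlight per m^2")
```

This compares incident sunlight only. Panel efficiency, pointing losses, and any time spent in Earth's shadow would all shift the real number.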

Then there’s cooling. Every data center on Earth fights thermodynamics. You generate heat, and you need to move it somewhere. That costs energy: sometimes 30-40% of a facility’s total power consumption goes to cooling alone. In the vacuum of space? Heat radiates directly toward a cosmic background at 2.7 Kelvin. No chillers, no cooling towers, no water consumption. You still need heat pipes and radiator panels sized for the load, but the physics works in your favor instead of against you.
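How big do those radiator panels get? A rough sketch using the Stefan-Boltzmann law. The assumptions are mine, not Starcloud's or NVIDIA's: a one-sided radiator running at 300 K with emissivity 0.9, no sunlight or Earth infrared landing on it, and a 700 W load, which is the H100 figure from earlier in the article.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(
    heat_w: float,
    radiator_temp_k: float = 300.0,  # assumed panel temperature
    emissivity: float = 0.9,         # assumed surface emissivity
    sink_temp_k: float = 2.7,        # cosmic background temperature
) -> float:
    """Area of a one-sided radiator that rejects heat_w by radiation alone."""
    net_flux_w_m2 = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k**4 - sink_temp_k**4
    )
    return heat_w / net_flux_w_m2


h100_area = radiator_area_m2(700.0)
print(f"Radiator area for one 700 W GPU: {h100_area:.2f} m^2")
```

Under these assumptions, one GPU needs about 1.7 square meters of radiator. Real designs also absorb heat from the Sun and Earth, so treat this as an optimistic lower bound.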

And land? There’s no zoning board in orbit. No NIMBY protests. No 18-month permitting cycles. This matters more than it sounds. Microsoft, Amazon, and Google are all competing for the same limited set of locations with sufficient grid capacity. Some sites are booked out through the end of this decade.

What NVIDIA and Starcloud are actually building

Starcloud is the company making this real. Through NVIDIA’s Inception program, they’ve already launched a refrigerator-sized satellite carrying an NVIDIA GPU to orbit as a test platform. Not a concept render. Not a whitepaper. Actual hardware, running computations in space.

The plan is to put NVIDIA H100-class accelerators in orbit, with early, limited customer access targeted for mid-decade if testing milestones hold. Think of it as a cloud region that happens to be 400 km above your head instead of 400 km down the highway.

Starcloud isn’t alone. Multiple companies are now sketching solar-powered AI satellite constellations, arguing that formation-flying clusters of compute nodes could deliver several times the energy efficiency of equivalent ground installations. The formation-flying part matters because it lets you distribute workloads across multiple satellites while maintaining inter-node communication via laser crosslinks.

NVIDIA’s role is strategic, not just financial. They’re providing the GPU architecture expertise, the CUDA ecosystem, and the software stack that makes orbital compute useful to developers who already build on NVIDIA’s platform. If you can deploy a containerized AI workload to AWS today, the goal is to make deploying it to an orbital node feel just as routine. We’re not there yet. But that’s the trajectory.

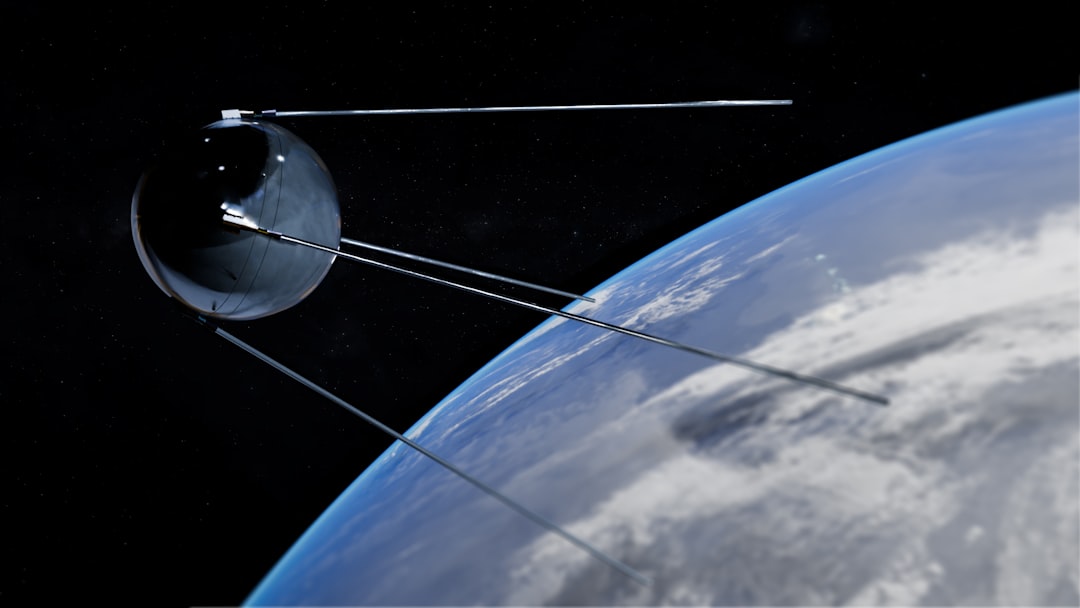

HPE already proved servers can survive in space

This isn’t purely theoretical. HPE has already run commercial servers on the International Space Station, and the second system carried an NVIDIA GPU module.

Spaceborne Computer-1 (SBC-1) launched in August 2017 aboard SpaceX CRS-12. It was an HPE ProLiant DL360 server, standard commercial hardware, not radiation-hardened. The bet was simple: instead of spending 10-100x more on rad-hard chips, use cheap COTS (commercial off-the-shelf) hardware with software-based error correction. SBC-1 ran for 615 days. That’s about 20 months of continuous operation in an environment that should have killed it.

SBC-2 raised the stakes. Launched February 2021 on Northrop Grumman’s Cygnus NG-15, it added an NVIDIA Jetson TX2i GPU module alongside an HPE Edgeline EL4000 edge system. The Jetson TX2i packs 256 CUDA cores, draws just 7.5-15 watts, and was built for industrial environments with a temperature range of -40°C to 85°C. In space, it ran real AI workloads: medical ultrasound processing for crew health monitoring, DNA sequencing analysis, satellite imagery classification for disaster response, and natural language processing experiments.

The real proof of concept wasn’t that the hardware survived. It was that AI and machine learning workloads ran successfully on commodity GPU hardware in microgravity, inside the ISS radiation environment, for an extended period. That changes the math entirely.

The engineering problems that still need solving

Look, I don’t want to oversell this. Orbital data centers have real engineering constraints that make terrestrial facilities look easy by comparison.

Radiation. Cosmic rays and solar particle events flip bits in memory. In LEO, you get roughly 1-10 single event upsets (SEUs) per day per gigabyte of SRAM. The South Atlantic Anomaly is a known hotspot. Total ionizing dose accumulates over time, degrading semiconductors permanently. The ISS receives about 150 millisieverts per year. NVIDIA Jetson modules aren’t radiation-hardened; they’re standard commercial silicon. SBC-2 survived by using ECC memory, software watchdogs, and checkpoint-restart mechanisms. But scaling this to thousands of GPUs in orbit? That’s a different challenge.
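To see why checkpoint-restart matters, it helps to turn the 1-10 SEUs per day per gigabyte figure into odds for a single job. A minimal sketch, assuming upsets arrive as a Poisson process. The 0.05 GB of exposed SRAM and the 6-hour job length are hypothetical values picked for illustration, not measurements from any real system.

```python
import math


def expected_upsets(seu_per_gb_day: float, sram_gb: float, hours: float) -> float:
    """Mean number of single event upsets over a job of the given length."""
    return seu_per_gb_day * sram_gb * (hours / 24.0)


def prob_at_least_one(expected: float) -> float:
    """Poisson probability of one or more upsets given the expected count."""
    return 1.0 - math.exp(-expected)


SRAM_GB = 0.05    # hypothetical amount of unprotected SRAM
JOB_HOURS = 6.0   # hypothetical batch job length

for rate in (1.0, 10.0):  # range quoted in the article
    mean = expected_upsets(rate, SRAM_GB, JOB_HOURS)
    print(
        f"{rate:>4.0f} SEU/GB/day -> {mean:.4f} expected upsets, "
        f"{prob_at_least_one(mean):.1%} chance of at least one"
    )
```

Multiply that per-node risk across thousands of GPUs and an upset somewhere in the fleet during any given job becomes close to certain, which is why error handling has to be built into the software stack.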

Thermal management. Yes, space is cold. But there’s no air convection in a vacuum. Your only options are conduction to heat pipes and radiation via large panels. Fans don’t work. The ISS solves this with active liquid cooling loops, which is why SBC-2 could run standard hardware. A standalone satellite needs its own thermal design from scratch.

Connectivity. This is the real bottleneck. LEO satellites have contact windows of 10-15 minutes per ground station pass. Even with a network of ground stations, you’re dealing with intermittent connectivity. Multi-terabit laser crosslinks between satellites are possible but haven’t been deployed at data-center scale. Latency in LEO is actually decent (5-40 ms per hop), but bandwidth is the constraint.
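Here is what a 10-15 minute contact window buys you in data volume. A back-of-the-envelope sketch: the 1 Gbit/s link rate and six usable passes per day are hypothetical placeholders, not figures from any operator.

```python
def gigabytes_per_pass(link_gbit_s: float, window_min: float) -> float:
    """Data moved in one ground station pass, in gigabytes (10^9 bytes)."""
    bits = link_gbit_s * 1e9 * window_min * 60
    return bits / 8 / 1e9


LINK_GBIT_S = 1.0     # hypothetical sustained downlink rate
PASSES_PER_DAY = 6    # hypothetical number of usable passes

for window in (10, 15):  # contact window range quoted above, in minutes
    per_pass = gigabytes_per_pass(LINK_GBIT_S, window)
    per_day = per_pass * PASSES_PER_DAY
    print(f"{window} min window: {per_pass:.1f} GB per pass, {per_day:.0f} GB per day")
```

Under those assumptions you move well under a terabyte a day, so what you send down has to be results, not raw data.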

Power budget. A 6U cubesat generates 20-40 watts from its solar panels. A 12U cubesat might manage 60-100 watts. A single NVIDIA H100 GPU draws 700 watts. Even a Jetson Orin at 15-60 watts eats a significant chunk of a small satellite’s total power. Serious orbital compute requires large solar arrays, which means larger, heavier, more expensive satellites.
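To put a size on "large solar arrays," divide the load by the sunlight a panel can actually convert. A rough sketch, assuming 30% cell efficiency (my assumption, in the range of multi-junction space cells) and ignoring pointing losses, degradation, and eclipse time:

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # orbital sunlight figure used earlier in the article


def array_area_m2(load_w: float, cell_efficiency: float = 0.30) -> float:
    """Sunlit panel area needed to supply load_w continuously."""
    return load_w / (SOLAR_CONSTANT_W_M2 * cell_efficiency)


loads_w = {
    "Jetson Orin (60 W)": 60.0,
    "H100 (700 W)": 700.0,
}

for name, watts in loads_w.items():
    print(f"{name}: {array_area_m2(watts):.2f} m^2 of panel")
```

One H100 alone needs well over a square meter of panel before you power anything else on the satellite, and a cubesat simply doesn't have that much surface to offer.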

None of these are unsolvable. But each one adds cost and complexity. The question isn’t whether it’s possible. It’s whether it’s cheaper than just building more nuclear-powered data centers on Earth.

Which AI workloads actually make sense in orbit

Not everything belongs in space. Training a 1-trillion-parameter model requires tightly coupled GPU clusters with microsecond-level interconnect latency. That’s staying on Earth for the foreseeable future.

But plenty of AI work is delay-tolerant. And that’s where orbital compute gets interesting.

Satellite imagery preprocessing. Earth observation satellites generate 1-10 TB of raw data daily. Most of it is useless: cloud-covered frames, ocean with nothing interesting happening. An on-board AI model that filters and compresses before downlinking can reduce data volume by 70-80%. That’s not a nice-to-have. At current downlink bandwidth constraints, it’s the only way to scale.
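The filtering step can be pictured as a simple triage pass over captured frames. This is a toy sketch, not any operator's pipeline: the `Frame` type, the `cloud_fraction` score (imagine it coming from an on-board classifier), and the 0.6 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    frame_id: str
    size_mb: float
    cloud_fraction: float  # 0.0 = clear, 1.0 = fully clouded (hypothetical model output)


def triage(frames: list[Frame], max_cloud: float = 0.6) -> list[Frame]:
    """Keep only frames clear enough to be worth downlinking."""
    return [f for f in frames if f.cloud_fraction <= max_cloud]


def reduction(frames: list[Frame], kept: list[Frame]) -> float:
    """Fraction of the original data volume that no longer needs downlinking."""
    total = sum(f.size_mb for f in frames)
    if total == 0:
        return 0.0
    return 1.0 - sum(f.size_mb for f in kept) / total


# Made-up sample data for illustration.
captured = [
    Frame("a", 100.0, 0.05),
    Frame("b", 100.0, 0.95),
    Frame("c", 100.0, 0.90),
    Frame("d", 100.0, 0.30),
    Frame("e", 100.0, 0.99),
]

kept = triage(captured)
print(f"Kept {len(kept)} of {len(captured)} frames, "
      f"cut downlink volume by {reduction(captured, kept):.0%}")
```

Dropping frames is only half of it. Compressing what remains is how you'd get from this toy example's 60% toward the 70-80% range.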

Nightly batch inference. Model inference that doesn’t need real-time response is perfect for orbital compute. Think overnight analytics, video transcription, document classification. Ship the data up, process it during orbital passes, downlink the results.

Remote region serving. Orbital compute nodes with ground station downlinks can serve AI inference to regions with poor terrestrial infrastructure. A farmer in rural sub-Saharan Africa doesn’t need a hyperscale data center in Virginia. A LEO satellite passing overhead could serve the same model with lower effective latency than routing through undersea cables.

Autonomous navigation. Deep space missions face one-way communication delays of roughly 3-22 minutes to Mars and 1.3 seconds to the Moon. At Mars distances, real-time ground control is impossible. Spacecraft need on-board AI for terrain classification, hazard avoidance, and autonomous decision-making. The models behind NASA’s Mars 2020 Perseverance rover were trained on NVIDIA GPUs on the ground, but the rover itself runs a radiation-hardened BAE RAD750 processor. Future missions will need much more compute than that.
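Those delays are just distance divided by the speed of light. A small sketch, assuming a mean Earth-Moon distance of 384,400 km and Earth-Mars distances of about 54.6 million km at the closest approaches and about 401 million km at the farthest. The distances are round figures added here for illustration, and published delay ranges shift depending on which ones you pick.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_s(distance_km: float) -> float:
    """One-way signal travel time in seconds."""
    return distance_km / SPEED_OF_LIGHT_KM_S


# Assumed distances in kilometers (approximate).
MOON_MEAN_KM = 384_400.0
MARS_NEAR_KM = 54.6e6
MARS_FAR_KM = 401.0e6

moon_s = one_way_delay_s(MOON_MEAN_KM)
mars_near_min = one_way_delay_s(MARS_NEAR_KM) / 60
mars_far_min = one_way_delay_s(MARS_FAR_KM) / 60

print(f"Moon: {moon_s:.2f} s one way")
print(f"Mars: {mars_near_min:.1f} to {mars_far_min:.1f} min one way")
```

Double each figure for a command-and-response round trip, which is the number that actually matters for joystick-style control.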

The industries driving demand for data processing power keep growing. Real-time gaming platforms, financial markets, autonomous vehicles, precision agriculture: all of them need faster inference at lower latency. When terrestrial capacity hits a wall, orbital capacity starts looking less exotic and more inevitable.

Who else is building compute infrastructure for space

NVIDIA and Starcloud aren’t operating in isolation. A growing ecosystem of companies is attacking different pieces of the orbital compute stack.

Planet Labs operates 200+ Dove satellites and 21 SkySats for Earth observation. They process most data on NVIDIA GPU clusters on the ground but have explored on-board AI for data triage. Satellogic builds sub-meter resolution observation satellites and has evaluated NVIDIA Jetson hardware for future on-board processing. Spire Global runs 100+ cubesats for weather and maritime tracking, using on-board AI for ship detection and GNSS data processing.

On the compute hardware side, Unibap (Sweden) builds SpaceCloud computers with AMD GPUs for satellite processing, already deployed on D-Orbit’s ION carrier. KP Labs (Poland) launched Intuition-1, a hyperspectral satellite with custom neural network hardware. Ubotica (now part of Redwire Space) developed CogniSAT using Intel Movidius chips.

And then there’s the ground infrastructure. NASA’s Frontier Development Lab uses NVIDIA DGX systems for asteroid detection, space weather forecasting, and lunar mapping. NASA Ames runs cloud-scale GPU clusters with NVIDIA A100 and H100 chips for climate modeling and aerodynamics simulation. The processing demand exists. The question is where to put it.

What this means for the future of cloud computing

Here’s the shift most people haven’t priced in yet. Cloud infrastructure as we know it, the AWS regions and Azure zones and GCP pods, is fundamentally constrained by geography. You need land, water, power, and fiber. All four are getting more expensive and harder to secure.

Orbital compute doesn’t replace terrestrial cloud. Not in our lifetimes. But it extends it. The same way CDN edge nodes extended centralized data centers to reduce latency, orbital nodes could extend cloud infrastructure to reduce energy and cooling costs for specific workload types.

NVIDIA and its partners are talking about limited public computing access by 2026-2027 if demonstrations continue on schedule. That’s aggressive. Space timelines slip. Rockets fail. Hardware surprises you in ways that ground testing can’t predict. I’d bet on 2028-2030 for anything resembling a usable commercial service.

But the trajectory is clear. AI compute demand is growing faster than terrestrial power infrastructure can expand. Nuclear is part of the answer. Geothermal is part of the answer. And orbit might be part of the answer too.

The companies that figure out reliable, cost-effective data processing at scale in orbit won’t just be building data centers in a new location. They’ll be creating an entirely new tier of computing infrastructure. One that doesn’t compete with humans for land, water, or electricity.

That’s not science fiction anymore. There’s a GPU in orbit right now. And NVIDIA is betting it won’t be the last one.

Frequently Asked Questions

Has NVIDIA actually sent servers to space?

Yes, though as GPU modules inside other companies’ systems rather than NVIDIA-built servers. HPE’s Spaceborne Computer-2 launched in February 2021 with an NVIDIA Jetson TX2i GPU module aboard the International Space Station. It ran AI workloads including medical imaging, DNA sequencing, and satellite image classification for over two years. Starcloud, backed by NVIDIA’s Inception program, has also launched a test satellite with NVIDIA GPU hardware.

How does cooling work for servers in space?

Space has no air, so convective cooling (fans) doesn’t work. Instead, orbital servers use heat pipes to conduct heat away from chips, then radiate it into space through large radiator panels. The vacuum of space actually helps because heat can radiate directly into the cosmic background at near-absolute zero (2.7 Kelvin). The ISS uses active liquid cooling loops, which is how SBC-2 ran on standard commercial hardware for over two years.

What AI workloads can run in orbit?

Delay-tolerant workloads are the best fit: satellite image preprocessing (reducing downlink data by 70-80%), batch inference jobs, remote region AI serving, and autonomous spacecraft navigation. Training massive models still requires tightly coupled ground-based GPU clusters with microsecond-level interconnect latency, so that stays on Earth.

Won’t radiation destroy the hardware?

Radiation is a real concern. LEO satellites experience 1-10 single event upsets (SEUs) per day per gigabyte of SRAM. NVIDIA GPUs aren’t radiation-hardened. HPE’s approach with SBC-2 used ECC memory, software watchdogs, and checkpoint-restart to handle errors in software rather than hardware. This COTS approach costs 10-100x less than traditional radiation-hardened chips.

When will orbital data centers be available commercially?

NVIDIA’s partners are targeting limited public access by 2026-2027 if testing milestones hold. Realistically, expect 2028-2030 for anything resembling a usable commercial service. Space timelines slip, and scaling from one test satellite to a compute constellation involves significant engineering challenges in power, thermal management, and connectivity.

How much power does a satellite need to run AI hardware?

An NVIDIA H100 GPU draws 700 watts. A Jetson Orin module draws 15-60 watts. A small 6U cubesat generates only 20-40 watts from solar panels. Serious orbital compute requires large solar arrays on bigger satellites, or formation-flying constellations that distribute power generation across multiple nodes.

Will orbital computing replace cloud data centers?

No. Orbital compute extends terrestrial cloud; it doesn’t replace it. Think of it like CDN edge nodes extending centralized data centers. Orbital nodes handle specific delay-tolerant workloads where energy efficiency and cooling costs matter more than microsecond latency. Traditional cloud infrastructure handles everything else.

Which companies are building space computing infrastructure?

Beyond NVIDIA and Starcloud, key players include HPE (Spaceborne Computer), Planet Labs (200+ Earth observation satellites), Satellogic (sub-meter imaging), Spire Global (100+ cubesats), Unibap (SpaceCloud with AMD GPUs), KP Labs (Intuition-1 satellite), and Ubotica/Redwire Space (CogniSAT). NASA’s Frontier Development Lab also uses NVIDIA DGX systems for space science AI research.